A NAS is a wonderful beast: you can use it to create your private cloud to store, back up and share your files, as a DLNA media server for smart TVs, as a download station for a variety of content and much, much more. True, you can configure your Raspberry Pi to do the same job, but appliance solutions are just… Ready to use! In the hard task of picking one for my house, my choice eventually went to the Synology DS214play.

Why? Because Synology is a very well-known manufacturer of corporate NAS solutions, and the software used in its high-end products is the same as in the consumer segment of its offer. Because there is a huge number of clients available for practically all OSes, desktop and mobile, to sync files, watch videos, listen to music and download files, while the NAS itself can act as a web, mail, file or git server, monitor IP cams and much, much more. Because these products are easily customizable and there is a vibrant community (even in Italian) of professional and domestic users behind them. And because the DS214play has an Intel Atom CPU, can transcode 1080p video on the fly, has 2 bays for hard disks and has an affordable price.

Cloud storage and file sharing

First of all, the NAS can be used as a Cloud Station to create private cloud storage, while the dedicated app keeps your data in sync across different machines (regardless of the OS). Once the router and a dynamic DNS service are configured, data is kept in sync outside the LAN too, so bye bye to Dropbox, Google Drive and any other public cloud services.

Alternatively, it is possible to set up a classic rsync flow between a Linux/Mac machine and the NAS. I used the latter: now I back up my entire desktop HD to the NAS, and the NAS can then back up its entire content (or part of it) to a cloud backup storage, like S3, Azure and others. The steps to set up rsync, ssh keys and the whole process are explained here. If ssh connects to the NAS but rsync doesn’t work, Network Backup has to be enabled via Menu -> Backup & Replication -> Network backup destination -> Enable Network Backup.
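
The rsync flow described above boils down to a single rsync-over-ssh command run from the desktop. Below is a minimal Python sketch that builds and runs it; the NAS account `backupuser`, the host `nas.local`, the destination `/volume1/backup/desktop` and the key path are hypothetical placeholders, not values from my setup, and `--delete` assumes you want an exact mirror of the desktop.

```python
import shlex
import subprocess
from pathlib import Path


def build_rsync_command(source: str, nas_user: str, nas_host: str,
                        dest: str, ssh_key: str, dry_run: bool = False) -> list[str]:
    """Build an rsync-over-ssh command that mirrors `source` onto the NAS."""
    # A trailing slash on the source copies the directory's contents,
    # not the directory itself.
    src = source if source.endswith("/") else source + "/"
    cmd = [
        "rsync",
        "-a",         # archive mode: recurse, keep permissions, times, symlinks
        "-z",         # compress data during transfer
        "--delete",   # remove files on the NAS that no longer exist locally
        "-e", f"ssh -i {shlex.quote(ssh_key)}",  # key-based ssh login
    ]
    if dry_run:
        cmd.append("--dry-run")  # list what would change without copying
    cmd += [src, f"{nas_user}@{nas_host}:{dest}"]
    return cmd


def run_backup(cmd: list[str]) -> int:
    """Run the command and return rsync's exit status (0 means success)."""
    return subprocess.run(cmd, check=False).returncode


if __name__ == "__main__":
    cmd = build_rsync_command(
        source=str(Path.home()),
        nas_user="backupuser",           # hypothetical NAS account
        nas_host="nas.local",            # hypothetical hostname
        dest="/volume1/backup/desktop",  # hypothetical share path
        ssh_key=str(Path.home() / ".ssh" / "id_rsa"),
        dry_run=True,
    )
    print(shlex.join(cmd))  # run_backup(cmd) would actually execute it
```

Running it first with `dry_run=True` shows what rsync would transfer; dropping `--delete` keeps files on the NAS even after they are removed locally.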

Extending the software

Additional software can be added using a well-known package repository mechanism. The official repository has a few apps, but the real magic starts when the SynoCommunity repo is added to the system. Git Server, Horde, ownCloud, CouchPotato, Python, Memcached, Mercurial, Headphones, SABnzbd and many more are now just a click away. And they run directly on the NAS, with lower power consumption than a dedicated PC.

It is also possible to create new packages with the help of spksrc, a cross-compilation framework intended to compile and package software for Synology NAS devices.

Manage movies, TV series and video in general

The first solution is DS Video, the dedicated client to manage personal collections of movies, TV series and videos. It’s available via web interface, for mobile OSes, and included in some Samsung TVs. Chromecast is supported, so it is possible to browse the movie catalog from web, smartphone or tablet and watch it directly on the TV, without connecting a single cable. Obviously the video format has to be compatible with Chromecast, but it’s always possible to re-encode the video in a supported format (download it on a PC, convert it, copy it back to the NAS).
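
For the re-encoding step, a common target is H.264 video with AAC audio in an MP4 container, which Chromecast generally plays natively. The Python sketch below builds such an ffmpeg command; the specific settings (High profile, level 4.1, CRF 20, 160k audio) are assumptions to tune rather than requirements, and ffmpeg must be installed on the PC doing the conversion.

```python
import shlex
import subprocess
from pathlib import Path


def chromecast_transcode_cmd(src: Path, crf: int = 20) -> list[str]:
    """Build an ffmpeg command re-encoding `src` to H.264/AAC in an MP4 file."""
    dst = src.with_name(src.stem + ".chromecast.mp4")
    return [
        "ffmpeg",
        "-i", str(src),
        "-c:v", "libx264",          # H.264 video
        "-profile:v", "high",
        "-level", "4.1",            # assumed safe level for 1080p playback
        "-preset", "medium",        # encoder speed/size trade-off
        "-crf", str(crf),           # constant quality: lower = better, larger
        "-pix_fmt", "yuv420p",      # 8-bit 4:2:0 for broad decoder support
        "-c:a", "aac",              # AAC audio
        "-b:a", "160k",
        "-movflags", "+faststart",  # index at file start so streaming begins fast
        str(dst),
    ]


def transcode(src: Path) -> int:
    """Run ffmpeg and return its exit status (0 means success)."""
    return subprocess.run(chromecast_transcode_cmd(src), check=False).returncode


if __name__ == "__main__":
    # Hypothetical file name; prints the command instead of running it.
    print(shlex.join(chromecast_transcode_cmd(Path("movie.mkv"))))
```

The resulting `movie.chromecast.mp4` can then be copied back to the NAS share indexed by DS Video.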

If DS Video is not enough, Plex Media Server can be installed too, with all the goodies Plex offers :). Once the Plex package has been downloaded, a manual install in the Synology Package Center is required. Before doing so, set Settings -> General -> Trust Level -> Allow any publisher, otherwise the installation will fail with a “This package does not contain a digital signature” error. New versions of Plex server have to be checked for from time to time, because it isn’t automatically updated by the Synology Package Center. Finally, Plex does not support the hardware transcoding features of the DS214play or DS415play, so transcoding is done in software and only up to 720p, with stuttering and pausing during playback and 100% CPU usage. Still, to me the Plex interface is way better than DS Video and its catalog recognizes a larger number of videos, so I still prefer Plex for browsing and organizing my movie and anime collection.

Music and Photos

I’m not a big user of music (I have a Sonos system at home; all the rest is history now), but music works pretty much like movies, with a dedicated app plus some more available from the community. Photos too.

Download station

One of the areas where Synology excels is the entire pipeline of scouting, grabbing, downloading, organizing and making content available. For example, it’s possible to simply add a movie to the IMDb Watchlist and have it automatically downloaded at home, ready to be streamed to the TV via Chromecast. Or, as soon as a new episode of a followed TV series airs, it is automagically downloaded to the NAS, with subtitles, ready to be viewed on XBMC, without any intervention. Amazing! :) Let’s see a potential setup for this. Please note that the following steps lead to actions that are illegal in several countries, so I strongly discourage everyone from doing so.

One way to automagically get the latest TV series episodes without a single interaction uses an RSS feed technique. On the search results page of Kickass and other services, it’s easy to find an RSS icon that points to an RSS feed with the results of the search. Every time the feed URL is loaded, new results, if any, can be found inside it. A specialized service, like showRSS, can create more fine-tuned feeds, for example with only a particular file quality.
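
The core of the feed technique is remembering which items were already seen and picking up only the new ones on each reload. The Python sketch below shows that logic on an RSS 2.0 document; the optional `quality` filter (a substring such as `"720p"` matched against the title) is a hypothetical stand-in for what a service like showRSS does when it builds a fine-tuned feed.

```python
import xml.etree.ElementTree as ET
from typing import Optional


def new_items(feed_xml: str, seen: set[str],
              quality: Optional[str] = None) -> list[dict[str, str]]:
    """Return items not seen in earlier polls, optionally filtered by quality.

    `seen` is updated in place with the identifiers of returned items, so
    calling this again on the same feed yields only genuinely new entries.
    """
    root = ET.fromstring(feed_xml)
    fresh = []
    for item in root.iter("item"):
        title = item.findtext("title", default="")
        link = item.findtext("link", default="")
        # <guid> is the usual stable identifier; fall back to the link.
        key = item.findtext("guid") or link
        if key in seen:
            continue
        # Skip items whose title lacks the wanted quality tag (e.g. "720p").
        if quality and quality.lower() not in title.lower():
            continue
        seen.add(key)
        fresh.append({"title": title, "link": link})
    return fresh
```

A real poller would fetch the feed URL periodically (for example with `urllib.request.urlopen`) and pass each returned link to the downloader; Download Station takes care of both parts once the feed is added to it.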

The default Download Station app is used to download BT/HTTP/FTP/NZB/RSS feed/eMule files, so the RSS feed URL can be added to the app, checking the “Automatically download all items” option. Voilà, it works :) A drawback of this approach is that unwanted downloaded files must be deleted manually, and subtitles are not downloaded.

If Kickass-like services are banned in a particular country, changing the NAS or router DNS to OpenDNS (208.67.220.220) or Google Public DNS (8.8.4.4) can generally work around the limitation.

A more advanced setup for the Download Station

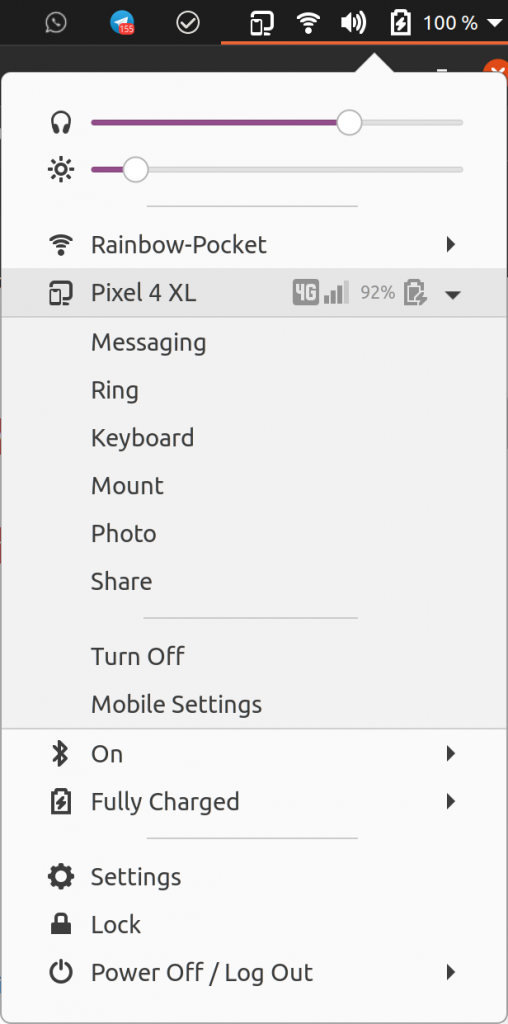

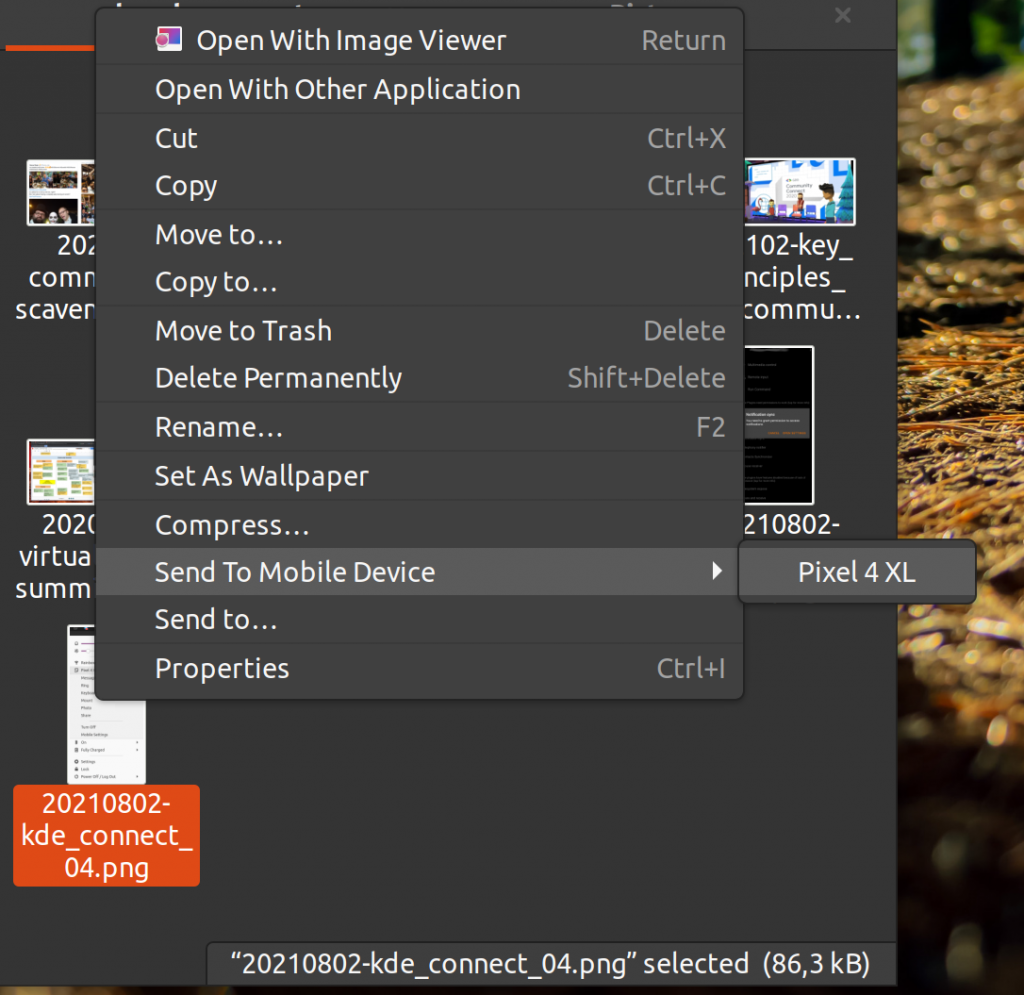

Sick Beard watches for new episodes of favorite TV shows and, when they are posted, downloads, sorts and renames them, generates metadata, downloads subtitles and more. It is available as a package in the SynoCommunity repo and, once installed (along with the Python package), can be configured to use Download Station for downloading snatched files. SABnzbd (also available in the SynoCommunity repo) can be used too for nzb files.
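
To make the sort-and-rename step concrete, here is a small Python sketch that maps a typical release file name to a Show/Season/Episode layout. Both the input pattern (`Show.Name.S01E02...`) and the output naming scheme are assumptions chosen for illustration; Sick Beard has its own configurable naming patterns and is not reproduced here.

```python
import re
from pathlib import PurePosixPath
from typing import Optional

# Matches names like "Show.Name.S01E02..." (hypothetical release format).
EPISODE_RE = re.compile(
    r"^(?P<show>.+?)[ ._-]+[Ss](?P<season>\d{1,2})[Ee](?P<episode>\d{1,3})"
)


def organized_path(filename: str) -> Optional[PurePosixPath]:
    """Map a downloaded file name to Show/Season NN/Show - SxxEyy.ext."""
    name = PurePosixPath(filename)
    m = EPISODE_RE.match(name.stem)
    if m is None:
        return None  # not recognizable as an episode; leave it alone
    # "the.big.show" -> "The Big Show"
    show = re.sub(r"[._]+", " ", m.group("show")).strip().title()
    season, episode = int(m.group("season")), int(m.group("episode"))
    return (PurePosixPath(show) / f"Season {season:02d}"
            / f"{show} - S{season:02d}E{episode:02d}{name.suffix}")
```

For example, `the.big.show.S02E05.720p.HDTV.x264.mkv` ends up as `The Big Show/Season 02/The Big Show - S02E05.mkv`, the kind of structure XBMC scrapers recognize easily.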

If Sick Beard is unable to communicate with Download Station directly, a watched folder can be configured in Download Station under Settings -> BT/HTTP/FTP/NZB -> Location, and Sick Beard then needs to save torrent and nzb files in that folder, under Settings -> Search Settings -> NZB or Torrent search -> Method: Black hole, selecting the same folder used before. Now Download Station will look for files in that folder, grab them as soon as they’re added by Sick Beard and start the download.
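
The black hole method is simply a shared folder used as a queue: the producer (Sick Beard) drops .torrent or .nzb files in it, and the consumer (Download Station) periodically picks up whatever is new. The Python sketch below illustrates that consumer side conceptually; it is not Synology’s implementation, and the polling interval and the `on_new` callback are hypothetical.

```python
import time
from pathlib import Path
from typing import Callable

WATCHED_SUFFIXES = {".torrent", ".nzb"}


def scan_blackhole(folder: Path, queued: set[str]) -> list[Path]:
    """Return .torrent/.nzb files that appeared in `folder` since the last scan."""
    found = []
    for path in sorted(folder.iterdir()):
        if (path.is_file()
                and path.suffix.lower() in WATCHED_SUFFIXES
                and path.name not in queued):
            queued.add(path.name)  # remember it so it is queued only once
            found.append(path)
    return found


def watch(folder: Path, on_new: Callable[[Path], None],
          interval: float = 30.0, rounds: int = 1) -> None:
    """Poll `folder` `rounds` times, calling `on_new` for each new file."""
    queued: set[str] = set()
    for i in range(rounds):
        for path in scan_blackhole(folder, queued):
            on_new(path)  # e.g. hand the file to the download client
        if i < rounds - 1:
            time.sleep(interval)
```

Polling keeps the sketch portable; the only contract between the two programs is the folder path they both point at.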

In the Settings, subtitle download can be enabled, while post-processing options are useful to rename and organize files and download metadata (for XBMC, for example) once Download Station has downloaded them.

A similar approach works for movies, this time using CouchPotato. Once it is installed from the SynoCommunity repo (the Python package is required too), set Settings -> Downloaders -> Black hole directory to communicate with Download Station. CouchPotato can then be configured to follow IMDb or Movies.IO watchlists, the Goodfilms user queue, Rotten Tomatoes, IMDb and Kinepolis charts and much more.
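
Following a watchlist essentially means comparing release names against (title, year) pairs. The Python sketch below shows one simple way to do that matching; the release-name format and the `is_wanted` helper are assumptions for illustration and do not reproduce CouchPotato’s actual logic, which is considerably more elaborate.

```python
import re
from typing import Optional

# Title, separator(s), then a 19xx/20xx year. The greedy title keeps the
# last year-like token, so "Blade.Runner.2049.2017..." yields year 2017.
RELEASE_RE = re.compile(r"^(?P<title>.+)[ ._(\[-]+(?P<year>(?:19|20)\d{2})\b")


def normalize(title: str) -> str:
    """Lowercase and collapse punctuation so 'The.Movie' == 'the movie'."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def parse_release(name: str) -> Optional[tuple[str, int]]:
    """Extract (normalized title, year) from a release name, if possible."""
    m = RELEASE_RE.match(name)
    if m is None:
        return None
    return normalize(m.group("title")), int(m.group("year"))


def is_wanted(release: str, watchlist: list[tuple[str, int]]) -> bool:
    """True if the release matches a (title, year) entry of the watchlist."""
    parsed = parse_release(release)
    wanted = {(normalize(t), y) for t, y in watchlist}
    return parsed is not None and parsed in wanted
```

Matching on the year as well as the title avoids grabbing remakes or older films that share a name with the one on the watchlist.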

A potential issue with the standard version of CouchPotato is that it searches only some newsgroup services, with limited support for torrents. To solve that, it is possible to install the CouchPotato Custom package instead (along with the Git and Python packages) from the SynoCommunity repo and, during the first launch, specify a GitHub repo with a customized version of CouchPotato. For example, https://github.com/RuudBurger/CouchPotatoServer.git is also able to search on ThePirateBay and KickAssTorrent. Change the DNS as described above if required.

If ThePirateBay and KickAssTorrent are also needed for TV series, the same “Custom” technique can be used with a fork of Sick Beard called SickRage. Installation is pretty straightforward: first, the Sick Beard Custom package (with the Git and Python packages) from the SynoCommunity repo needs to be installed and, during its first launch, https://github.com/echel0n/SickRage.git must be used as Fork URL and master as branch. That’s all; more detailed instructions are in the official forum. Configuration of SickRage follows the same settings as Sick Beard, but now there are a bunch of additional Search Providers to use ;)

The NAS is really a great piece of hardware, and its software flexibility allows it to be used in a variety of different ways (what about hosting your WordPress blog there?). Future explorations will surely lead to additional setups (I have something in mind for IP cams, for example), so stay tuned!
